ChatGPT’s recent image generation capabilities have challenged our previous understanding of AI-generated media. The recently announced GPT-4o model shows a noteworthy ability to interpret images with high accuracy and recreate them in viral styles, such as the one inspired by Studio Ghibli. It even renders text accurately in AI-generated images, something AI has previously struggled with. And now, OpenAI is launching two new models that can dissect images for cues, gathering information that might even escape a human glance.

OpenAI announced two new models earlier this week that take ChatGPT’s thinking abilities up a notch. Its new o3 model, which OpenAI calls its “most powerful reasoning model,” improves on existing interpretation and perception abilities, getting better at “coding, math, science, visual perception, and more,” the company claims. Meanwhile, o4-mini is a smaller, faster model built for “cost-efficient reasoning” in the same areas. The news follows OpenAI’s recent launch of the GPT-4.1 family of models, which offers faster processing and a larger context window.

With their improved reasoning, both models can now incorporate images directly into their chain of thought, which OpenAI says makes them capable of “thinking with images.” Going beyond basic image analysis, o3 and o4-mini can inspect images more closely and even manipulate them, for example by cropping, zooming, flipping, or enhancing details, to pick up visual cues that could help ChatGPT arrive at a solution.

According to the announcement, the models blend visual and textual reasoning and can be combined with other ChatGPT features such as web search, data analysis, and code generation. This capability is expected to become the basis for more advanced AI agents with multimodal analysis.

In practice, you can upload pictures of a wide range of items, from flowcharts and scribbled handwritten notes to images of real-world objects, and expect ChatGPT to understand them more deeply and produce better output, even without a descriptive text prompt. With this, OpenAI is inching closer to Google’s Gemini, which offers the impressive ability to interpret the real world through live video.

Despite the bold claims, OpenAI is limiting access to paid members, presumably to keep its GPUs from “melting” again as it struggles to keep up with the compute demand for new reasoning features. For now, the o3, o4-mini, and o4-mini-high models will be available exclusively to ChatGPT Plus, Pro, and Team members, while Enterprise and Education users will get access in one week’s time. Meanwhile, Free users will get limited access to o4-mini when they select the “Think” button in the prompt bar.

When you’re playing around with ChatGPT or Gemini for the first time, it’s easy to just toss in a quick, simple prompt and see what happens.“What can

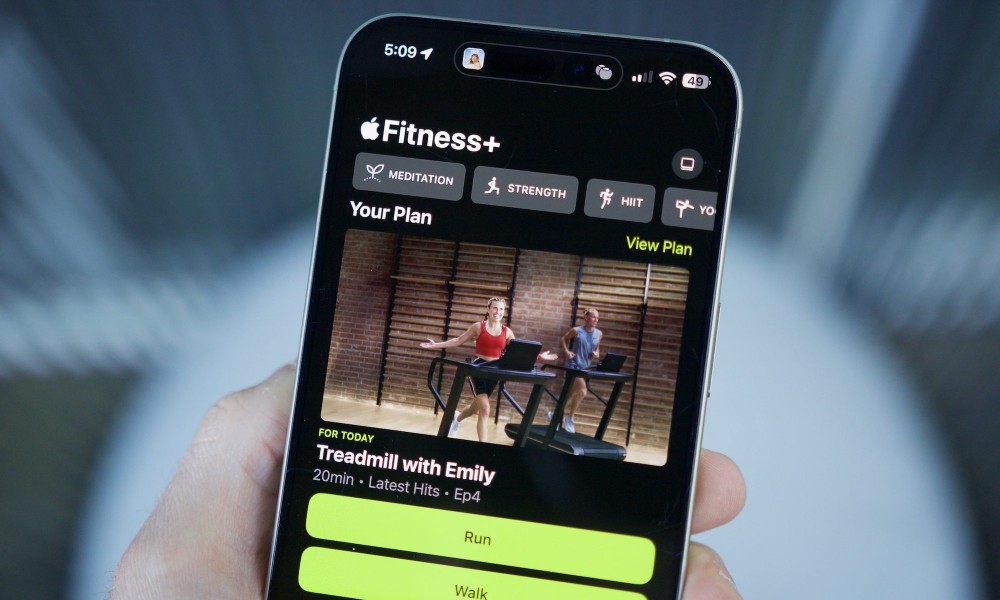

Apple’s efforts in the health segment are a class ahead of the competition. But more than just racing ahead with innovation, the company has taken a m

Snapchat is bringing generative AI videos to its social platform. The company has today introduced what it calls Video Gen AI Lenses, which essentiall

Tech for Change This story is part of Tech for Change: an ongoing series in which we shine a spotlight on positive uses of technology, and showcase ho

Microsoft is set to get a major AI update and is preparing its server capacity to support the next iteration of OpenAI’s models. As OpenAI CEO, Sam Al

You no longer need to sign in to use ChatGPT Search.“ChatGPT search is now available to everyone on chatgpt.com,” OpenAI said in a post on X announcin

Earlier today, I gave a live demo of ChatGPT as it served enlightening words on a lifestyle without nicotine vices. Two of my heavy-smoker friends saw

European UnionFake news reports and disinformation campaigns driven by generative AI pose a significant risk to causing bank runs, according to a new